The HLS format

A technical overview of the HTTP Live Streaming (HLS) format

The HLS standard, which Apple introduced in 2009, is, at first glance, relatively simple (particularly when compared with DASH). However, there are still many variations and details that determine whether a stream will be readable and playable.

Whilst one doesn’t need to know the detail of the spec to be able to create an effective streaming solution, it is beneficial to have a general understanding of the format, if only to be able to understand how a solution like broadpeak.io handles this type of stream, and troubleshoot particular scenarios.

Quick Summary

Manifest

The manifests in HLS, which are referred to as playlists, are text files following a schema called M3U8. There is one primary playlist providing the list of renditions (called variant and media streams) available (you’ll sometimes hear it referred to as “multi-variant playlist”), and additional media playlists for each of those to provide the list of segments available.

Codecs

The most widely used codecs for HLS are:

- H.264/AVC and H.265/HEVC for video streams

- AAC and Dolby Digital for audio streams

Segment Containers

- HLS traditionally required segments in Transport Stream (TS) containers with H.264/AVC.

- Since 2016 (with iOS 10), HLS has supported fragmented MP4 (fMP4), with both H.264/AVC and H.265/HEVC (for which it is mandatory).

In the most recent versions, HLS supports CMAF containers (which are based on ISOBMFF and therefore very similar to fMP4) for streams encoded with H.264 and H.265. This makes it theoretically possible to have a single set of fragments for both HLS and DASH, a major advantage in terms of storage (and therefore storage costs), if your device supports those versions. For most applications, fMP4 segments that are not strictly CMAF can be used to achieve the same result.

Content protection

The DRM system in use is not typically constrained by the ABR format, but in practice HLS content often uses FairPlay, which is usually the only DRM supported on Apple devices. For other applications, Widevine is sometimes used as well.

Subtitles

HLS usually carries subtitles in WebVTT format (a format closely related to SRT). This is usually done in one of two ways:

- A single “sidecar” WebVTT file with subtitles for the whole duration of the content

- A segmented format in which the subtitles are chunked into segments, in the same way as video and audio streams. WebVTT is also the typical format used, but you may sometimes find IMSC (a subset of TTML) wrapped in fMP4, though not all players may support it equally.

Thumbnails

For playback purposes, usually to provide preview thumbnails when the user hovers over a scrub bar, or to provide trick play mode on some devices, one or multiple image tracks will commonly be added to an HLS manifest.

There are several possibilities in HLS, with varying degrees of support in players:

- I-frame playlists are the primary mechanism mandated by the HLS specification, which requires decoding the video tracks to extract the I-frames (i.e. independent full frames).

- An alternative spec (pushed by Disney, Roku and WarnerMedia) uses more standard image files, provided by an Image Media Playlist.

There are other formats that may typically be used by players, such as image sprites declared in a WebVTT file, but they are usually not provided to the player through the manifest.

Manifest Format

An HLS manifest is contained in a collection of Playlist files, which are text files derived from the M3U format, and which follow a specific schema and usually carry the extension .m3u8.

A crucial point to understand is that HLS manifests contain one “main” playlist with accompanying playlists for each representation.

Playlists, Streams and Media

One of the M3U8 files is the Multivariant Playlist, which contains an index of all streams that can be used to play back the asset, with some qualitative metadata about each, such as codecs, audio channels and languages.

- For video, the Multivariant Playlist usually contains one or multiple Variant Streams. A Variant Stream is a semantically equivalent version of the same content, but with different quality levels, usually with different bitrates and resolutions.

- For audio tracks and subtitles, the Multivariant Playlist usually contains one or multiple Media. When multiple languages are available, multiple renditions are linked into Groups through metadata identifiers.

- Other tracks (such as I-frame playlists) have specific elements to specify them.

For each of those, the Multivariant Playlist contains a link (technically a URI) to a separate Media Playlist (contained in a distinct M3U8 file).

Alternative Terminology

There exists alternative terminology when referring to those concepts, depending on the specification used (see below for References):

- Since 2021, both the Pantos and Apple specs use Multivariant Playlist

- For a while, Apple used Primary Playlist.

- Previously this was commonly referred to as “Master Playlist” but the industry rightly elected to move away from this type of discriminatory term.

- The Pantos spec uses Rendition as a generic term for Variant Streams and Media Streams. Technically renditions should be different from one another (eg. different camera angles or different languages), whereas Variants are all the same content but with different technical specs.

Multivariant Playlist

Here is an example of a Multivariant playlist with 2 video streams and 1 audio media. Refer to the section below to understand the tags being used.

#EXTM3U

#EXT-X-VERSION:6

# Audio

#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="AUDIO",NAME="English",LANGUAGE="en",URI="audio_en.m3u8"

# Video

#EXT-X-STREAM-INF:BANDWIDTH=4364913,AVERAGE-BANDWIDTH=4277405,CODECS="avc1.4D4028,mp4a.40.2",RESOLUTION=1920x1080,AUDIO="AUDIO",FRAME-RATE=24

video/video_1080p.m3u8

#EXT-X-STREAM-INF:BANDWIDTH=714803,AVERAGE-BANDWIDTH=696753,CODECS="avc1.4D401E,mp4a.40.2",RESOLUTION=640x360,AUDIO="AUDIO",FRAME-RATE=24

video/video_360p.m3u8

Descriptive Tags

From the example above you can see that a playlist is essentially a text file in which each line is either a URI or a line that starts with #. Lines that start with #EXT are called descriptive tags.

The principal tags are as follows:

- EXTM3U: Indicates that the file is a playlist. Every playlist must start with this tag.

- EXT-X-VERSION: Indicates what version of the protocol the playlist adheres to (and through that, what features it supports).

- EXT-X-STREAM-INF: Describes an available Variant Stream. The URI to the corresponding Media Playlist is the next line in the file.

- EXT-X-MEDIA: Describes an available Media, typically audio or subtitles. The URI to the corresponding Media Playlist is in the URI parameter.

Any line starting with # but not recognised is considered to be just a comment.
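As an illustration of how these tags fit together, here is a minimal Python sketch that reads a Multivariant Playlist and collects its Variant Streams and Media. It is not a complete or spec-compliant parser: the function names (`parse_attributes`, `parse_multivariant`) are made up for this example, and every tag other than EXT-X-STREAM-INF and EXT-X-MEDIA is simply skipped.

```python
import re

# KEY=VALUE pairs, where VALUE is either a quoted string or an unquoted token.
ATTRIBUTE_RE = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')


def parse_attributes(attribute_list):
    """Turn 'A=1,B="x,y"' into {'A': '1', 'B': 'x,y'}."""
    return {key: value.strip('"') for key, value in ATTRIBUTE_RE.findall(attribute_list)}


def parse_multivariant(text):
    """Return (variants, media) found in a Multivariant Playlist."""
    variants, media = [], []
    pending = None  # attributes of an EXT-X-STREAM-INF waiting for its URI line
    for line in (raw.strip() for raw in text.splitlines()):
        if not line:
            continue
        if line.startswith("#EXT-X-STREAM-INF:"):
            pending = parse_attributes(line.split(":", 1)[1])
        elif line.startswith("#EXT-X-MEDIA:"):
            media.append(parse_attributes(line.split(":", 1)[1]))
        elif line.startswith("#"):
            continue  # other tags and comments are ignored in this sketch
        elif pending is not None:
            # The first URI line after EXT-X-STREAM-INF belongs to that variant.
            pending["URI"] = line
            variants.append(pending)
            pending = None
    return variants, media


PLAYLIST = """#EXTM3U
#EXT-X-VERSION:6
# Audio
#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="AUDIO",NAME="English",LANGUAGE="en",URI="audio_en.m3u8"
# Video
#EXT-X-STREAM-INF:BANDWIDTH=4364913,AVERAGE-BANDWIDTH=4277405,CODECS="avc1.4D4028,mp4a.40.2",RESOLUTION=1920x1080,AUDIO="AUDIO",FRAME-RATE=24
video/video_1080p.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=714803,AVERAGE-BANDWIDTH=696753,CODECS="avc1.4D401E,mp4a.40.2",RESOLUTION=640x360,AUDIO="AUDIO",FRAME-RATE=24
video/video_360p.m3u8
"""

variants, media = parse_multivariant(PLAYLIST)
for variant in variants:
    print(variant["RESOLUTION"], variant["BANDWIDTH"], variant["URI"])
```

Note that the CODECS value contains a comma inside quotes, which is why the attribute list cannot simply be split on commas.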

A more advanced example below shows multiple audio streams, I-frame playlists, and subtitles:

#EXTM3U

#EXT-X-VERSION:7

# Audio

#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="audio",LANGUAGE="en",NAME="English",DEFAULT=YES,AUTOSELECT=YES,URI="audio_en.m3u8"

#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="audio",LANGUAGE="fr",NAME="Français",DEFAULT=NO,AUTOSELECT=NO,URI="audio_fr.m3u8"

# Subtitles

#EXT-X-MEDIA:TYPE=SUBTITLES,GROUP-ID="subs",NAME="English",DEFAULT=YES,AUTOSELECT=YES,LANGUAGE="en",URI="subs_en.m3u8"

#EXT-X-MEDIA:TYPE=SUBTITLES,GROUP-ID="subs",NAME="Français",DEFAULT=NO,AUTOSELECT=NO,LANGUAGE="fr",URI="subs_fr.m3u8"

# Video

#EXT-X-STREAM-INF:BANDWIDTH=800000,RESOLUTION=640x480,CODECS="avc1.4d401e,mp4a.40.2",AUDIO="audio",SUBTITLES="subs",CLOSED-CAPTIONS=NONE

video_640x480.m3u8

#EXT-X-STREAM-INF:BANDWIDTH=1400000,RESOLUTION=1280x720,CODECS="avc1.4d401f,mp4a.40.2",AUDIO="audio",SUBTITLES="subs",CLOSED-CAPTIONS=NONE

video_1280x720.m3u8

# Images

#EXT-X-I-FRAME-STREAM-INF:BANDWIDTH=150000,RESOLUTION=640x480,CODECS="avc1.4d401e",URI="iframes_640x480.m3u8"

#EXT-X-I-FRAME-STREAM-INF:BANDWIDTH=240000,RESOLUTION=1280x720,CODECS="avc1.4d401f",URI="iframes_1280x720.m3u8"

- EXT-X-I-FRAME-STREAM-INF: Describes an available image track in I-frame playlist format. The URI to the corresponding Media Playlist is in the URI parameter.

Media Playlist

Each Media Playlist contains a list of the (Media) Segments that make up the stream.

Here is an example of a Media Playlist for a video stream (Asset):

#EXTM3U

#EXT-X-PLAYLIST-TYPE:VOD

#EXT-X-VERSION:7

#EXT-X-MEDIA-SEQUENCE:0

#EXT-X-TARGETDURATION:4

#EXTINF:4.004,

video/4000000/segment-0.ts

#EXTINF:4.004,

video/4000000/segment-1.ts

#EXTINF:2.002,

video/4000000/segment-2.ts

...

#EXT-X-ENDLIST

Descriptive Tags

- EXTM3U: Just as with a Multivariant Playlist, this indicates that the file is a playlist. Every playlist must start with this tag, regardless of the playlist type.

- EXT-X-VERSION: Indicates what version of the protocol the playlist adheres to (and through that, what features it supports). Note that the version of a Media Playlist does not necessarily have to match the version of the Multivariant Playlist that links to it, although it is common.

- EXT-X-PLAYLIST-TYPE: Determines the playlist type, VOD or EVENT. If VOD is stated, then the playlist is immutable. If EVENT is stated, the playlist may be appended to over time, as is needed for live streams.

- EXT-X-TARGETDURATION: The maximum duration of any media segment in the playlist (in seconds).

- EXTINF: Duration of an individual media segment (in seconds), the URI to which follows on the next line.

- EXT-X-ENDLIST: Indicates that the list of segments ends there and that no more segments will be added. It is typically found in VOD playlists, and is added to live or EVENT playlists only once the stream has ended.
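To make the relationship between these tags concrete, the following Python sketch reads a Media Playlist, pairs each EXTINF duration with the URI on the next line, and checks the durations against EXT-X-TARGETDURATION. The function name `parse_media_playlist` and the dictionary layout are assumptions made for this example; a real player handles many more tags.

```python
def parse_media_playlist(text):
    """Collect the headline tags and the (uri, duration) pairs of a Media Playlist."""
    info = {"type": None, "target_duration": None, "ended": False, "segments": []}
    pending = None  # duration from the last EXTINF, waiting for its URI line
    for line in (raw.strip() for raw in text.splitlines()):
        if line.startswith("#EXT-X-PLAYLIST-TYPE:"):
            info["type"] = line.split(":", 1)[1]
        elif line.startswith("#EXT-X-TARGETDURATION:"):
            info["target_duration"] = int(line.split(":", 1)[1])
        elif line.startswith("#EXTINF:"):
            # Format is EXTINF:<duration>,[<title>]
            pending = float(line.split(":", 1)[1].split(",", 1)[0])
        elif line == "#EXT-X-ENDLIST":
            info["ended"] = True
        elif line and not line.startswith("#") and pending is not None:
            info["segments"].append((line, pending))
            pending = None
    return info


PLAYLIST = """#EXTM3U
#EXT-X-PLAYLIST-TYPE:VOD
#EXT-X-VERSION:7
#EXT-X-MEDIA-SEQUENCE:0
#EXT-X-TARGETDURATION:4
#EXTINF:4.004,
video/4000000/segment-0.ts
#EXTINF:4.004,
video/4000000/segment-1.ts
#EXTINF:2.002,
video/4000000/segment-2.ts
#EXT-X-ENDLIST
"""

info = parse_media_playlist(PLAYLIST)
total = sum(duration for _, duration in info["segments"])

# A segment duration, rounded to the nearest integer, should not exceed the target duration.
too_long = [uri for uri, duration in info["segments"]
            if int(duration + 0.5) > info["target_duration"]]

print(f"{len(info['segments'])} segments, {total:.3f}s in total, ended={info['ended']}")
print("segments over target duration:", too_long)
```

The rounding step matters here: a 4.004 second segment is acceptable with a target duration of 4, because the comparison is made on the rounded value.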

Other Tags

In more complex scenarios, other tags may be found in amongst the list of segments. Here is such an example:

#EXTM3U

#EXT-X-VERSION:6

#EXT-X-TARGETDURATION:8

#EXT-X-MEDIA-SEQUENCE:983759

#EXT-X-KEY:METHOD=SAMPLE-AES,URI="skd://yourkeyid",KEYFORMAT="com.apple.streamingkeydelivery",KEYFORMATVERSIONS="1",IV=0x73fbe3277bdf0bfc5217125bde4ca589

#EXT-X-MAP:URI="../video_1_init.mp4"

#EXT-X-DISCONTINUITY-SEQUENCE:6

#EXT-X-DATERANGE:ID="417295",START-DATE="2023-07-06T11:05:51.000Z",PLANNED-DURATION=15.000,SCTE35-OUT=0xFC305E0000B601001800FFF00506FEBF498D80004802144355454900065E0F7FFF00002932E00000300E10021F4355454900065EFF7FBF0C10414446520133F10134B04F065E060220020000020F4355454900065E0E7FBF0000310D104E52E7B7

#EXT-X-PROGRAM-DATE-TIME:2023-07-06T11:05:35.000Z

#EXTINF:8.000,

../video_1_983759.mp4

#EXTINF:8.000,

../video_1_983760.mp4

#EXT-X-DISCONTINUITY

#EXT-X-CUE-OUT:30.0

#EXT-X-PROGRAM-DATE-TIME:2023-07-06T11:05:51.000Z

#EXTINF:8.000,

../video_1_983761.mp4

#EXTINF:7.000,

../video_1_983762.mp4

#EXT-X-CUE-IN

#EXT-X-DISCONTINUITY

#EXTINF:8.000,

../video_1_983761.mp4

#EXTINF:6.440,

../video_1_983762.mp4

- EXT-X-KEY specifies that the content is protected by DRM and provides information on how to decrypt the content.

- EXT-X-MAP specifies where to find the file containing the stream initialisation for all segments that follow it. Stream initialisation is needed when using fragmented MP4 containers.

- EXT-X-PROGRAM-DATE-TIME maps the next segment in the file to an absolute date and time. This is generally used for live streams and can be critical in some applications, such as ad insertion.

- EXT-X-DATERANGE defines a date range with a starting point and an end point or duration. It then often carries some metadata such as a SCTE35 payload, which defines the role of the range. This is often used in live streams to define where ads are located in the original stream. You will often see alternative markers instead of or in addition to it, such as:

  - EXT-X-CUE-OUT and EXT-X-CUE-IN to mark the boundaries of the range

  - EXT-OATCLS-SCTE35 to carry the SCTE35 information

- EXT-X-DISCONTINUITY indicates that there is a potentially significant change of characteristics between the next segment and the one that precedes it. This could be because of different encoding parameters. Its presence informs the player that it may have to decode the following segment(s) in a different way. It is almost always found in manifests that are manipulated, such as when inserting ad segments in the middle of a stream.
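The Python sketch below shows one way these markers could be read together: it derives a wall-clock start time for each segment from EXT-X-PROGRAM-DATE-TIME (adding up EXTINF durations until the next such tag) and flags the segments that sit between EXT-X-CUE-OUT and EXT-X-CUE-IN. It is only an illustration under simplifying assumptions: the function names are invented here, and EXT-X-DATERANGE and SCTE35 payloads are not decoded.

```python
from datetime import datetime, timedelta


def parse_timestamp(value):
    # Replace the trailing "Z" so that older Python versions can parse the value.
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def build_timeline(text):
    """Return a list of (uri, start_time, duration, in_ad_break) tuples."""
    timeline = []
    clock = None      # wall-clock time of the next segment, when known
    duration = None   # duration from the last EXTINF, waiting for its URI line
    in_break = False  # True between EXT-X-CUE-OUT and EXT-X-CUE-IN
    for line in (raw.strip() for raw in text.splitlines()):
        if not line:
            continue
        if line.startswith("#EXT-X-PROGRAM-DATE-TIME:"):
            clock = parse_timestamp(line.split(":", 1)[1])
        elif line.startswith("#EXT-X-CUE-OUT"):
            in_break = True
        elif line.startswith("#EXT-X-CUE-IN"):
            in_break = False
        elif line.startswith("#EXTINF:"):
            duration = float(line.split(":", 1)[1].split(",", 1)[0])
        elif line.startswith("#"):
            continue  # other tags are ignored in this sketch
        elif duration is not None:
            timeline.append((line, clock, duration, in_break))
            if clock is not None:
                clock += timedelta(seconds=duration)
            duration = None
    return timeline


PLAYLIST = """#EXTM3U
#EXT-X-TARGETDURATION:8
#EXT-X-PROGRAM-DATE-TIME:2023-07-06T11:05:35.000Z
#EXTINF:8.000,
../video_1_983759.mp4
#EXTINF:8.000,
../video_1_983760.mp4
#EXT-X-DISCONTINUITY
#EXT-X-CUE-OUT:30.0
#EXT-X-PROGRAM-DATE-TIME:2023-07-06T11:05:51.000Z
#EXTINF:8.000,
../video_1_983761.mp4
#EXTINF:7.000,
../video_1_983762.mp4
#EXT-X-CUE-IN
"""

timeline = build_timeline(PLAYLIST)
for uri, start, seconds, in_break in timeline:
    print(start.isoformat(), seconds, "AD" if in_break else "content", uri)

ad_seconds = sum(seconds for _, _, seconds, in_break in timeline if in_break)
```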

Absolute and Relative URIs

The Multivariant Playlists contain paths (technically URIs) to Media Playlists. In the Media Playlists, paths are also used to refer to the Media Segments.

HLS supports relative URIs (i.e. the location of those playlists and segments is defined relative to the location of the playlist that contains the URIs) and absolute URIs (i.e. the full path, usually to an HTTP web server).

Relative and absolute paths can also be mixed.
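A short Python sketch of how a client might resolve these URIs, using the standard library's `urljoin`. The playlist file names `main.m3u8` and `video_1.m3u8` are hypothetical, chosen only for the illustration; the key point is that each URI is resolved against the playlist that contains it, so segment URIs are relative to the Media Playlist, not to the Multivariant Playlist.

```python
from urllib.parse import urljoin

# Hypothetical location of a Multivariant Playlist.
multivariant_url = "https://myorigin.mydomain.video/asset1/main.m3u8"

# A relative URI is resolved against the playlist that contains it.
media_url = urljoin(multivariant_url, "video/video_1080p.m3u8")

# An absolute URI is returned unchanged, whatever the base is.
absolute_url = urljoin(multivariant_url, "https://myorigin.mydomain.video/asset1/audio_en.m3u8")

# Inside a Media Playlist, "../" steps up from that playlist's own folder.
media_playlist_url = "https://myorigin.mydomain.video/asset1/video/video_1.m3u8"
init_url = urljoin(media_playlist_url, "../video_1_init.mp4")

print(media_url)
print(absolute_url)
print(init_url)
```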

Examples

All examples so far in this page have used relative paths.

Below are examples of Multivariant and Media playlists using absolute paths:

#EXTM3U

#EXT-X-VERSION:6

# Audio

#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="AUDIO",NAME="English",LANGUAGE="en",URI="https://myorigin.mydomain.video/asset1/audio_en.m3u8"

# Video

#EXT-X-STREAM-INF:BANDWIDTH=4364913,AVERAGE-BANDWIDTH=4277405,CODECS="avc1.4D4028,mp4a.40.2",RESOLUTION=1920x1080,AUDIO="AUDIO",FRAME-RATE=24

https://myorigin.mydomain.video/asset1/video_1080p.m3u8

#EXT-X-STREAM-INF:BANDWIDTH=714803,AVERAGE-BANDWIDTH=696753,CODECS="avc1.4D401E,mp4a.40.2",RESOLUTION=640x360,AUDIO="AUDIO",FRAME-RATE=24

https://myorigin.mydomain.video/asset1/video_360p.m3u8

#EXTM3U

#EXT-X-PLAYLIST-TYPE:VOD

#EXT-X-VERSION:7

#EXT-X-MEDIA-SEQUENCE:0

#EXT-X-TARGETDURATION:4

#EXTINF:4.004,

https://myorigin.mydomain.video/asset1/video/4000000/segment-0.ts

#EXTINF:4.004,

https://myorigin.mydomain.video/asset1/video/4000000/segment-1.ts

#EXTINF:2.002,

https://myorigin.mydomain.video/asset1/video/4000000/segment-2.ts

...

Audio Rendition Groups

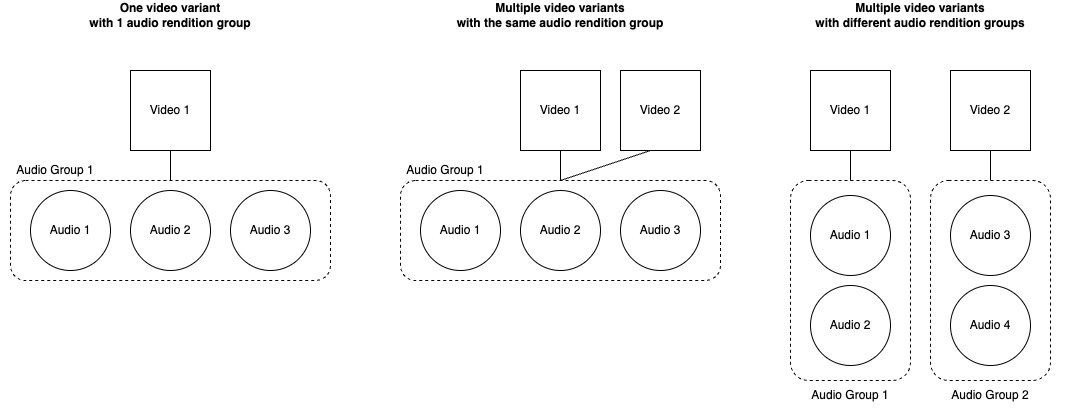

HLS provides the ability to link video tracks with sets of audio tracks, to indicate to the player that these are supposed to be played together. This grouping is known as "audio rendition groups".

The link between a video Variant and its associated Media is established by using the same value in the AUDIO attribute of the #EXT-X-STREAM-INF tag and the GROUP-ID attribute of the one or more associated #EXT-X-MEDIA tags.
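The matching rule can be sketched in a few lines of Python. The dictionaries below stand in for already-parsed tag attributes (they mirror the advanced Multivariant Playlist example earlier on this page), and the helper names are assumptions made for this illustration.

```python
from collections import defaultdict

# Attributes as they might look once EXT-X-MEDIA and EXT-X-STREAM-INF are parsed.
media = [
    {"TYPE": "AUDIO", "GROUP-ID": "audio", "LANGUAGE": "en", "URI": "audio_en.m3u8"},
    {"TYPE": "AUDIO", "GROUP-ID": "audio", "LANGUAGE": "fr", "URI": "audio_fr.m3u8"},
    {"TYPE": "SUBTITLES", "GROUP-ID": "subs", "LANGUAGE": "en", "URI": "subs_en.m3u8"},
]
variants = [
    {"RESOLUTION": "640x480", "AUDIO": "audio", "URI": "video_640x480.m3u8"},
    {"RESOLUTION": "1280x720", "AUDIO": "audio", "URI": "video_1280x720.m3u8"},
]


def build_audio_groups(media_entries):
    """Index audio renditions by their GROUP-ID."""
    groups = defaultdict(list)
    for entry in media_entries:
        if entry["TYPE"] == "AUDIO":
            groups[entry["GROUP-ID"]].append(entry)
    return groups


def audio_for_variant(variant, groups):
    """Return the audio renditions whose GROUP-ID matches the variant's AUDIO attribute."""
    return groups.get(variant.get("AUDIO"), [])


groups = build_audio_groups(media)
for variant in variants:
    languages = [entry["LANGUAGE"] for entry in audio_for_variant(variant, groups)]
    print(variant["RESOLUTION"], "->", languages)
```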

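Subtitle Groups

A similar mechanism is used to group subtitles with video tracks: the SUBTITLES attribute of #EXT-X-STREAM-INF matches the GROUP-ID attribute of the associated #EXT-X-MEDIA tags.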

References

The information above only scratches the surface of what the HLS specification(s) offer, but for common use cases, understanding this should be sufficient.

For more details on the structure of HLS, please refer to the Pantos RFC8216 specification.

Apple provide their own documentation, which is more specific to what their own devices support:

For a list of the different playlist versions and HLS features they support, check About the EXT-X-VERSION tag
