Adaptive streaming

Or why modern streaming rocks!

Digital media (or “multimedia”, as it used to be called) has been around for a while, and as a result a great variety of media formats (video, audio, and ancillary data) exists. At the risk of oversimplifying, there are generally two types of delivery formats for digital media:

- File-based, usually used for VOD. The whole media is contained in a file that can be stored and shared, and that needs to be downloaded (possibly in its entirety) before it can be played back.

- Stream-based, usually used for Live. The media is delivered gradually and can be played as it is delivered.

A key development in the history of delivery formats was the advent, in the early 2000s, of adaptive streaming (also known as adaptive bitrate streaming, or ABR streaming): a set of technological principles for delivering video to each user as efficiently as possible, at the highest quality that user can sustain, using standard HTTP mechanisms (the same ones used to deliver other web content such as webpages).

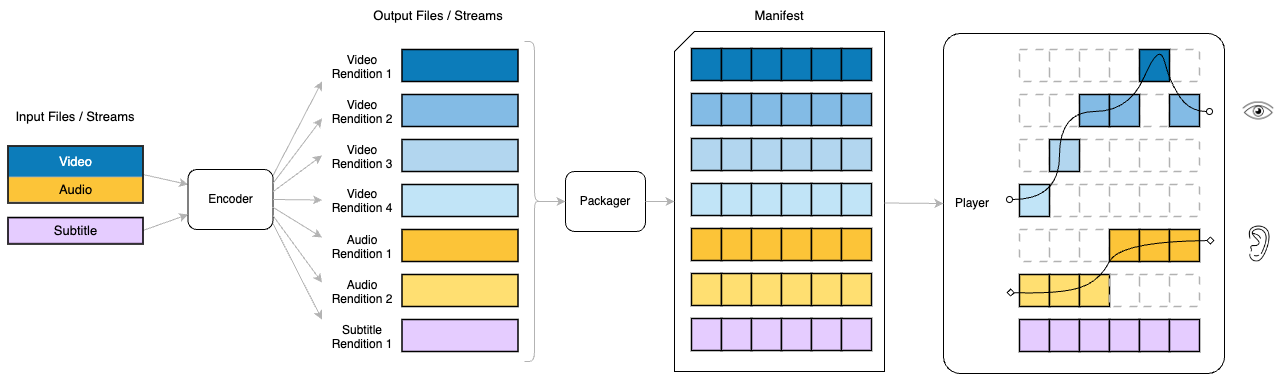

In practice, with the formats commonly used today, it works in the following way:

- The encoder takes the input and generates multiple renditions of it (often referred to as representations) at different quality levels: usually different bitrates and, for video, different resolutions. The collection of those renditions is often referred to informally as the ladder (although you’ll often hear profile, a very ambiguous term in an industry in which it’s already over-used).

- The packager then segments those streams into small media files (called segments or fragments).

- The packager also generates a text document (called a manifest or a playlist, depending on the format used) that lists the representations, with descriptive metadata about them, and defines the segments available.

- At playback time, the player reads the manifest, and then uses information about the playback environment (such as device capability, resolution, available bandwidth, state of the buffer, etc.) to choose which streams to play.

Crucially, and thanks to the small size of the segments, the player can regularly switch to different streams to “adapt” to changes in the playback conditions, such as internet speed and bandwidth availability, in order to always deliver the highest possible quality without causing stalling or re-buffering.

Example

A ladder could for example have the following video and audio renditions. Note that this is not a recommendation, just an example.

| Rendition # | Resolution | Bitrate | Aim |
|---|---|---|---|
| Video 1 | 426 x 240 | 250 Kbps | lowest quality for devices with poor bandwidth |
| Video 2 | 640 x 360 | 500 Kbps | |
| Video 3 | 960 x 540 | 1 Mbps | |
| Video 4 | 1280 x 720 | 2 Mbps | |
| Video 5 | 1920 x 1080 | 4 Mbps | highest visual quality for devices with plenty of bandwidth |
| Audio 1 | n/a | 64 Kbps | lowest quality audio |
| Audio 2 | n/a | 192 Kbps | maximum quality audio |
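
To make the player's choice more concrete, here is a minimal Python sketch of a throughput-based selection over the video renditions of the example ladder. It is only an illustration: the `Rendition` class, the `choose_rendition` function, the 0.8 safety factor and the throughput samples are all assumptions made for this example, and real players typically also weigh buffer state, screen size and device capability.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    name: str
    width: int
    height: int
    bitrate_kbps: int


# Video renditions from the example ladder, lowest to highest bitrate.
LADDER = [
    Rendition("Video 1", 426, 240, 250),
    Rendition("Video 2", 640, 360, 500),
    Rendition("Video 3", 960, 540, 1000),
    Rendition("Video 4", 1280, 720, 2000),
    Rendition("Video 5", 1920, 1080, 4000),
]


def choose_rendition(ladder, measured_kbps, safety_factor=0.8):
    """Return the highest-bitrate rendition that fits within a safety margin
    of the measured throughput, or the lowest rendition if none fits."""
    budget = measured_kbps * safety_factor
    by_bitrate = sorted(ladder, key=lambda r: r.bitrate_kbps)
    fitting = [r for r in by_bitrate if r.bitrate_kbps <= budget]
    return fitting[-1] if fitting else by_bitrate[0]


# Hypothetical throughput samples (kbps), e.g. one per downloaded segment.
for sample_kbps in [6000, 3000, 900, 200]:
    print(sample_kbps, "->", choose_rendition(LADDER, sample_kbps).name)
```

Because the decision is re-evaluated for every sample, the selected rendition moves down the ladder as the measured throughput drops, which is the "adaptive" part of adaptive streaming. Falling back to the lowest rendition when nothing fits is a design choice of this sketch: it favors continuing playback over stalling.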

ABR Formats

The most common formats used by the majority of streaming services are:

- HLS: short for HTTP Live Streaming. Despite the name, it can be used for both Live and VOD.

- MPEG-DASH (or DASH for short): stands for Dynamic Adaptive Streaming over HTTP.

At a high level, both these formats offer the same functionality, but with technical differences in the way they are implemented. The main reason why there are 2 formats, and why you are likely to need both in your streaming service, is compatibility with the devices you want to cover.

- HLS is a proprietary format, developed by Apple, that is generally required on Apple devices and browsers: iOS, tvOS, and Safari.

- DASH is an international standard (ISO/IEC 23009-1) that is commonly used with devices and browsers in the Google family: Android devices and Chrome browsers.

Other devices and browsers (for example Smart TVs and Roku streamers) will often support one or both of those, even if only partially. In reality, however, the boundaries are much fuzzier than this. Depending on your use case and chosen clients, you may be able to limit yourself to a single format, even when targeting a wide range of clients.

Both formats offer a huge amount of functionality and flexibility, and both have changed over the years in terms of what they can support and how they are recommended to be used.

For some technical information about both formats, you can check the sections on the HLS Format and the DASH Format.
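
As an illustration of what a manifest looks like, the following Python sketch builds a very small HLS multivariant playlist for the video renditions of the example ladder. The `#EXTM3U` and `#EXT-X-STREAM-INF` tags (with their `BANDWIDTH` and `RESOLUTION` attributes) are part of HLS. The function name and the playlist file names are hypothetical, and using the nominal video bitrate as `BANDWIDTH` is a simplification, since a real packager would declare a peak bitrate that also accounts for audio.

```python
# (width, height, nominal bitrate in kbps, hypothetical media playlist name)
VIDEO_LADDER = [
    (426, 240, 250, "video_240p.m3u8"),
    (640, 360, 500, "video_360p.m3u8"),
    (960, 540, 1000, "video_540p.m3u8"),
    (1280, 720, 2000, "video_720p.m3u8"),
    (1920, 1080, 4000, "video_1080p.m3u8"),
]


def build_multivariant_playlist(ladder):
    """Return the text of a minimal HLS multivariant playlist that lists
    one variant stream per rendition in the ladder."""
    lines = ["#EXTM3U"]
    for width, height, bitrate_kbps, uri in ladder:
        # BANDWIDTH is expressed in bits per second.
        bandwidth = bitrate_kbps * 1000
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},RESOLUTION={width}x{height}"
        )
        # The line after the tag is the URI of that rendition's media playlist.
        lines.append(uri)
    return "\n".join(lines) + "\n"


print(build_multivariant_playlist(VIDEO_LADDER))
```

Each referenced media playlist would in turn list the segments for that rendition. A DASH manifest carries equivalent information, but as an XML document rather than a line-based text file.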

Manifest Manipulation

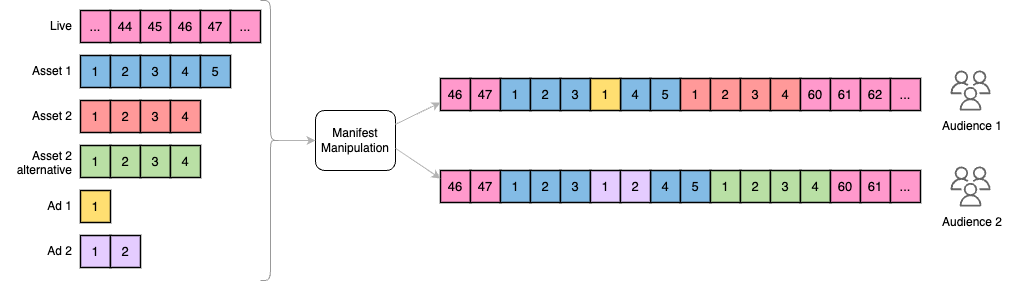

Besides adapting playback to different quality levels based on available bandwidth, ABR streaming also opens the door to some pretty interesting uses. Because the manifest indicates (among other things) where to find each of the segments that compose the stream or streams it describes, it is possible to build manifests that refer to segments coming from different sources.

This process is often referred to as “manifest manipulation”, and its flexibility allows for a wide range of applications.

- Content replacement and personalization: the manifest file can be manipulated to alter the sequence of segments or to replace some segments with others, for example to deliver personalized content to individual users, show different content to different regions, or perform A/B testing.

- Virtual channels: A manifest can be made from multiple other assets, stitched together into a live-like experience.

- Ad Insertion: Because the manifest file dictates where to fetch segments from, it can be manipulated to replace content segments with ad segments. This enables dynamic ad insertion (DAI), where ads are seamlessly inserted into the content stream on the server side (see Video Advertising for more).

- Time-shifting Features: Features like Start-over (allowing users to watch a live event from the beginning), Catch-up TV (watching past live events), and time-shifted TV (pausing and resuming live broadcasts) are made possible by manipulating the manifest file to reference the appropriate segments.

Since all these applications use the same mechanism, they can also be combined.
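
The ad insertion case can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of how any particular product implements it: the `Segment` type, the two helper functions, the segment URIs and the 6-second durations are all made up for the example. The playlist tags themselves (`#EXTINF`, `#EXT-X-DISCONTINUITY`, `#EXT-X-TARGETDURATION`, and so on) are standard HLS media playlist tags.

```python
import math
from typing import NamedTuple


class Segment(NamedTuple):
    duration: float  # seconds
    uri: str
    source: str      # hypothetical label: "content" or "ad"


def replace_with_ads(content, ads, start, count):
    """Return a new segment list in which `count` content segments,
    starting at index `start`, are replaced by the ad segments."""
    return content[:start] + ads + content[start + count:]


def render_media_playlist(segments):
    """Render segments as a minimal VOD HLS media playlist, marking every
    switch between sources with a discontinuity tag."""
    target = math.ceil(max(s.duration for s in segments))
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{target}",
        "#EXT-X-MEDIA-SEQUENCE:0",
    ]
    previous_source = None
    for seg in segments:
        if previous_source is not None and seg.source != previous_source:
            # Tells the player that encoding parameters or timestamps may change.
            lines.append("#EXT-X-DISCONTINUITY")
        lines.append(f"#EXTINF:{seg.duration:.3f},")
        lines.append(seg.uri)
        previous_source = seg.source
    lines.append("#EXT-X-ENDLIST")
    return "\n".join(lines) + "\n"


CONTENT = [Segment(6.0, f"content/seg{i}.ts", "content") for i in range(4)]
ADS = [Segment(6.0, f"ads/ad{i}.ts", "ad") for i in range(2)]

stitched = replace_with_ads(CONTENT, ADS, start=1, count=2)
print(render_media_playlist(stitched))
```

No media is re-encoded or copied here: only the list of segment references changes. The same list-editing approach applies to the other use cases, for example concatenating the segment lists of several assets to form a virtual channel.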

Since manifest manipulation techniques underpin the <<productName>> solution, you will read a lot more about them in later chapters, in particular Manifest manipulation.
